I looked at the order in which LZ258 explores the tree. I will ignore tenuki moves for simplicity and translate into human-understandable terms and diagrams.

The initial position is the following:

$$Bc Initial position

$$ ----------------------------+

$$ . . . . . . . . . . . . . . |

$$ O . . X O O O . . 1 O O O . |

$$ . . . X X X X O O . X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


LZ examines a first variation:

$$Bc Variation 1.1: not much damage

$$ ----------------------------+

$$ . . . . . . . . 2 6 . . . . |

$$ O . . X O O O 4 3 1 O O O . |

$$ . . . X X X X O O 5 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


Let's try Black 3 at P17:

$$Bc Variation 1.2: not much damage either

$$ ----------------------------+

$$ . . . . . . . . 2 4 . . . . |

$$ O . . X O O O . . 1 O O O . |

$$ . . . X X X X O O 3 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


Let's try Black 3 at P19:

$$Bc Variation 1.3.1. White is split but alive on both sides

$$ ----------------------------+

$$ . . . . . . . 6 2 3 . . . . |

$$ O . . X O O O . 4 1 O O O . |

$$ . . . X X X X O O 5 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


That's better than variations 1.1 and 1.2, so we can discard them and explore the subtree starting at Black 3 (P19) further to see if we can do even better. The only other reasonable response is Black 5 at N19, so we have to check whether N19 is better than P17. Let's read a few moves deep:

$$Bcm5 Variation 1.3.2.1.1. White is split but alive on both sides

$$ ----------------------------+

$$ . . . . 2 . 6 1 O X . . . . |

$$ O 5 . X O O O 4 O X O O O . |

$$ . . . X X X X O O 3 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


That's even better.

Let's try Black 7 at M19.

$$Bcm5 Variation 1.3.2.1.2.1. White is dead, game over.

$$ ----------------------------+

$$ . . . 4 2 . 3 1 O X . . . . |

$$ O . . X O O O 6 O X O O O . |

$$ . . . X X X X O O 5 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


(Of course White won't play there; LZ prefers to tenuki. Saving 5 stones in gote is not interesting right now.)

But perhaps we could try Black 9 at H18?

$$Bcm5 Variation 1.3.2.1.2.2.1 Very good for Black

$$ ----------------------------+

$$ . . 6 4 2 . 3 1 O X . . . . |

$$ O . 5 X O O O . O X O O O . |

$$ . . . X X X X O O 7 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


This doesn't prevent escaping but is very good for Black.

$$Bcm5 Variation 1.3.2.1.2.2.2 Roughly the same

$$ ----------------------------+

$$ . 7 6 4 2 . 3 1 O X . . . . |

$$ O 8 5 X O O O . O X O O O . |

$$ . . . X X X X O O 9 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


Well, it seems that along this line we can split White and, in addition, capture a few stones. But we didn't check other possibilities for White 6.

$$Bcm5 Variation 1.3.2.2

$$ ----------------------------+

$$ . . . . . . . 1 O X . . . . |

$$ O . . X O O O 2 O X O O O . |

$$ . . . X X X X O O 3 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


This looks very bad for White, so let's come back to variation 1.3.2.1. We said that Black 7 at M19 is good, but can we do better, for instance with Black 7 at G18?

$$Bcm7 Variation 1.3.2.1.3.

$$ ----------------------------+

$$ . . . . O . . X O X . . . . |

$$ O 1 . X O O O . O X O O O . |

$$ . . . X X X X O O . X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


This looks better for White, so let's come back to variation 1.3.2 with White 6 at J19.

$$Wcm6 Variation 1.3.2.3.

$$ ----------------------------+

$$ . . . 1 . . . X O X . . . . |

$$ O . . X O O O . O X O O O . |

$$ . . . X X X X O O . X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


Looks good for Black, but we'll figure that out later. Let's first continue variation 1.3.2.1.3.

$$Bcm7 Variation 1.3.2.1.3., continued

$$ ----------------------------+

$$ . . . . O . 2 X O X . . . . |

$$ O 1 . X O O O . O X O O O . |

$$ . . . X X X X O O . X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


Still looks good but not optimal for Black. Let's first finish variation 1.3.2.2.

$$Bcm5 Variation 1.3.2.2, continued:

$$ ----------------------------+

$$ . . . . 4 . . 1 O X . . . . |

$$ O . . X O O O 2 O X O O O . |

$$ . . . X X X X O O 3 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


White is dead, so variation 1.3.2.2 can be discarded; White won't play 6 at N18.

Let's come back to variation 1.3.2.1.3 and read it until the end.

$$Bcm7 Variation 1.3.2.1.3., continued

$$ ----------------------------+

$$ . . . . O . 2 X O X . . . . |

$$ O 1 . X O O O 4 O X O O O . |

$$ . . . X X X X O O 3 X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


OK, we've seen that position already. It's good for Black but not the best variation, so we can forget that Black 7 at G18. But let's come back to variation 1.3.2.3.

$$Wcm6 Variation 1.3.2.3., continued

$$ ----------------------------+

$$ . . . 1 . . . X O X . . . . |

$$ O . 2 X O O O . O X O O O . |

$$ . . . X X X X O O . X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


Very good for Black; White should tenuki and give up his stones for the moment.

OK, so now that we have done 98 playouts, why not try some silly move for White 2, just to check if we overlooked something?

$$Wcm2 Other choice for :w2:

$$ ----------------------------+

$$ . . . . . . . . 2 . . . . . |

$$ O . . X O O O . 1 X O O O . |

$$ . . . X X X X O O . X O . O |

$$ . O . . , . O X X X X X O . |

$$ O . . X O . X O . . . X . . |


Oh no, that's really silly, White is dead, forget it.

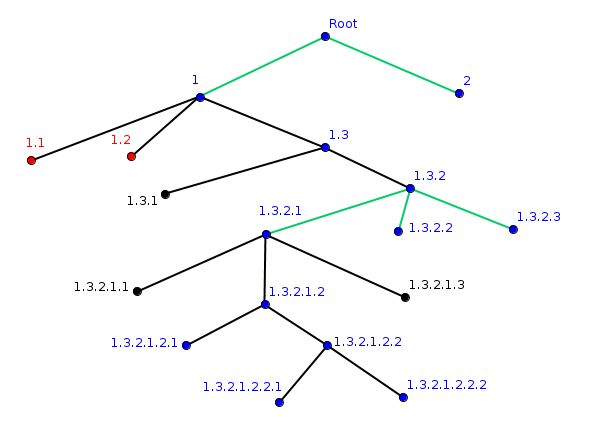

Conclusion: it seems that the exploration is mostly depth-first, from left to right, but LZ sometimes comes back to an earlier branch and reads a few moves further from there.
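To make that a bit more concrete, here is a small sketch that takes the variation labels in the order they show up in this post and flags every step that goes back to an earlier branch. It assumes that the dotted labels sort in left-to-right tree order, which is only my reading of the numbering, and the helper names (`as_key`, `backward_jumps`) are made up for this illustration. The unlabeled "other choice for White 2" diagram is left out.

```python
# Variation labels in the order they appear in this post,
# including the "continued" diagrams.
VISIT_ORDER = [
    "1.1", "1.2", "1.3.1",
    "1.3.2.1.1", "1.3.2.1.2.1", "1.3.2.1.2.2.1", "1.3.2.1.2.2.2",
    "1.3.2.2", "1.3.2.1.3", "1.3.2.3",
    "1.3.2.1.3", "1.3.2.2", "1.3.2.1.3", "1.3.2.3",
]


def as_key(label):
    """Turn '1.3.2.1' into (1, 3, 2, 1) so labels compare in tree order."""
    return tuple(int(part) for part in label.split("."))


def backward_jumps(order):
    """Return (index, label) for every step that does not move forward.

    A pure left-to-right depth-first walk visits labels in strictly
    increasing order, so any label that is not larger than the furthest
    one seen so far counts as a return to an earlier branch (or a revisit).
    """
    jumps = []
    furthest = ()
    for index, label in enumerate(order):
        key = as_key(label)
        if key <= furthest:
            jumps.append((index, label))
        else:
            furthest = key
    return jumps


if __name__ == "__main__":
    for index, label in backward_jumps(VISIT_ORDER):
        print(f"step {index}: back to {label}")
```

Under that assumption the first eight steps are in plain depth-first order, and all the returns happen afterwards, inside the 1.3.2 subtree.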

Here is the tree. Black edges represent a sequence starting with Black, green edges a sequence starting with White. A blue vertex is a very good position for Black, a black vertex is slightly good for Black, and a red vertex is bad for Black.

Attachment: tree.png
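A possible reason for the jumps back to earlier branches: as far as I know, LZ picks the next node to read with an AlphaGo Zero-style PUCT rule, where each move gets its value estimate plus an exploration bonus that shrinks as the move collects visits. The sketch below is a toy version under that assumption. The numbers for `q` and `prior` are invented, `q` is kept fixed instead of being updated by playouts, and details such as LZ's actual constants or its handling of unvisited moves are left out.

```python
import math


def puct_score(q, prior, parent_visits, child_visits, c_puct=1.0):
    """Value estimate plus an exploration bonus that shrinks with visits."""
    return q + c_puct * prior * math.sqrt(parent_visits) / (1 + child_visits)


def simulate(children, playouts, c_puct=1.0):
    """Repeatedly pick the child with the best PUCT score.

    `children` maps a move name to fixed toy values for `q` and `prior`.
    Returns the list of picked move names, one per playout.
    """
    visits = {name: 0 for name in children}
    picks = []
    for _ in range(playouts):
        total = sum(visits.values())
        best = max(
            children,
            key=lambda name: puct_score(
                children[name]["q"],
                children[name]["prior"],
                total,
                visits[name],
                c_puct,
            ),
        )
        visits[best] += 1
        picks.append(best)
    return picks


# Invented toy values: A is the favoured move, B a slightly worse sibling.
TOY = {
    "A": {"q": 0.55, "prior": 0.6},
    "B": {"q": 0.50, "prior": 0.4},
}

if __name__ == "__main__":
    print("".join(simulate(TOY, 12)))
```

In this toy setup the favoured move A gets most of the playouts, but every few playouts its bonus has shrunk enough for B to be picked again, which looks a lot like "mostly depth-first, with occasional returns to an earlier branch".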